
Hadoop's MapReduce model reads from and writes to disk, which slows down processing, and it fails when it comes to real-time data processing. Spark reduces the number of read/write cycles to disk and stores intermediate data in memory, hence its faster processing speed. Apache Spark is developed in the Scala programming language and runs on the JVM, so a Java installation is mandatory. In this article, we will explore Apache Spark installation in standalone mode.

Install Apache Spark without Hadoop drivers

For graph processing, where algorithms such as PageRank are used, Spark comes with a graph computation library called GraphX. For fault tolerance, Spark uses RDDs and various data storage models. Hadoop YARN: in this mode, the driver runs inside the application master, which is managed by YARN on the cluster.

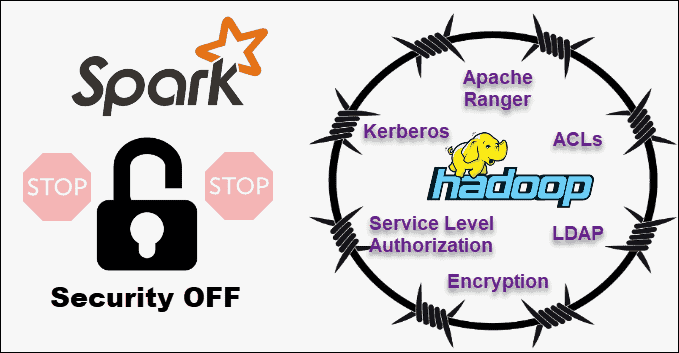

Hadoop vs Apache Spark

| Features | Hadoop | Apache Spark |
| --- | --- | --- |
| Data processing | Apache Hadoop provides batch processing | Apache Spark provides both batch processing and stream processing |
| Memory usage | Hadoop is disk-bound | Spark uses large amounts of RAM |
| Security | Better security features | Its security is currently in its infancy |
| Fault tolerance | Replication is used for fault tolerance | RDD and various data storage models are used for fault tolerance |

Spark is structured around Spark Core, the engine that drives scheduling, optimizations, and the RDD abstraction, and that connects Spark to the correct filesystem (HDFS, S3, RDBMS, or Elasticsearch). Several libraries operate on top of Spark Core, including Spark SQL, which allows you to run SQL-like commands on distributed data sets, MLlib for machine learning, GraphX for graph problems, and Spark Streaming, which allows for the input of continually streaming log data.

If you already have Java 8 and Python 3 installed, you can skip the first two steps.
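One way to check whether the Java and Python prerequisites are already in place is sketched below. It is a minimal sketch, not part of the original tutorial: the function names are hypothetical, and it assumes `java` is on the `PATH` and prints the usual `version "..."` banner.

```python
import re
import shutil
import subprocess
import sys


def parse_java_major(version_output):
    """Return the major Java version found in `java -version` output, or None."""
    match = re.search(r'version "(\d+)(?:\.(\d+))?', version_output)
    if match is None:
        return None
    first = int(match.group(1))
    # Java 8 and earlier report themselves as 1.x (for example "1.8.0_281").
    if first == 1 and match.group(2) is not None:
        return int(match.group(2))
    return first


def installed_java_major():
    """Return the major version of the `java` found on PATH, or None if absent."""
    java = shutil.which("java")
    if java is None:
        return None
    # `java -version` writes its banner to stderr rather than stdout.
    result = subprocess.run([java, "-version"], capture_output=True, text=True)
    return parse_java_major(result.stderr + result.stdout)


def python_is_3():
    """Return True when this interpreter is Python 3."""
    return sys.version_info.major == 3
```

If `installed_java_major()` returns `None`, no `java` executable was found on the `PATH`, so the Java installation step should not be skipped.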

Install Apache Spark without Hadoop on Windows 10

Installing Apache Spark on Windows 10 may seem complicated to novice users, but this simple tutorial will have you up and running. The Spark 3.0 files are now extracted to F:\big-data\spark-3.0.0.
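The extraction step can also be scripted. The sketch below uses Python's standard `tarfile` module and assumes the downloaded archive contains a single top-level folder, which it strips so that the files land directly in the target directory. The function name is hypothetical and is not something the tutorial itself prescribes.

```python
import tarfile
from pathlib import Path, PurePosixPath


def extract_stripping_top_dir(archive_path, dest_dir):
    """Extract a .tgz archive into dest_dir, dropping its top-level folder."""
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    with tarfile.open(archive_path, "r:gz") as tar:
        for member in tar.getmembers():
            parts = PurePosixPath(member.name).parts
            if len(parts) < 2:
                continue  # skip the top-level folder entry itself
            if ".." in parts:
                raise ValueError("unsafe path in archive: " + member.name)
            # Re-root the member so "top/bin/x" is written as "bin/x".
            member.name = str(PurePosixPath(*parts[1:]))
            tar.extract(member, dest)
    return dest
```

For example, `extract_stripping_top_dir(r"F:\big-data\spark-3.0.0-bin-without-hadoop.tgz", r"F:\big-data\spark-3.0.0")` would produce the layout described above. The archive location here is an assumption, so adjust it to wherever the download was saved.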

How to install Apache Spark without Hadoop

Warning: Your file name might be different from spark-3.0.0-bin-without-hadoop.tgz if you chose a package with pre-built Hadoop libs.
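To see which kind of package a downloaded file is, the file name can be inspected. The sketch below assumes the `spark-<version>-bin-<build>.tgz` naming pattern seen in the file name above. The function name and the returned keys are hypothetical.

```python
import re

# Assumed naming pattern: spark-<version>-bin-<build>.tgz
_PACKAGE_RE = re.compile(
    r"^spark-(?P<version>\d+(?:\.\d+)*)-bin-(?P<build>.+)\.tgz$"
)


def describe_spark_package(file_name):
    """Return version and build details parsed from a Spark package file name."""
    match = _PACKAGE_RE.match(file_name)
    if match is None:
        return None  # the name does not follow the assumed pattern
    build = match.group("build")
    return {
        "version": match.group("version"),
        "build": build,
        # Only the "without-hadoop" build ships without Hadoop libraries.
        "bundles_hadoop": build != "without-hadoop",
    }
```

With this sketch, `describe_spark_package("spark-3.0.0-bin-without-hadoop.tgz")` reports `bundles_hadoop` as `False`, while a name such as `spark-3.0.0-bin-hadoop2.7.tgz` reports it as `True`.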